On the surface, public bug bounty programs look like a no-brainer. You invite security researchers to find security issues in your application, and you pay only for valid results. Who would say no to that? However, as we explore below, for many organizations, launching a public bug bounty program is not the best idea. It’s like storming the castle before gathering systematic intelligence and planning strategic attacks.

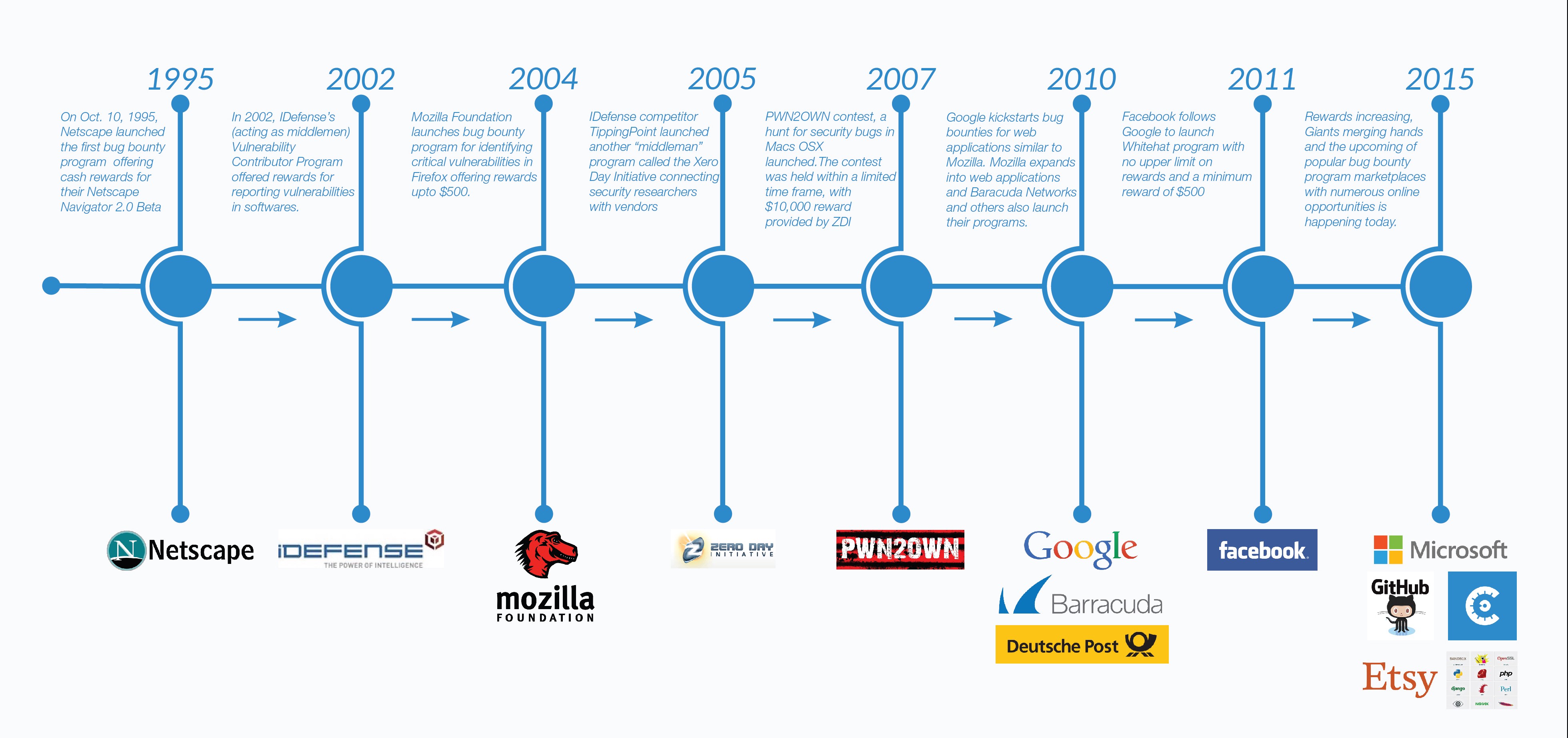

It’s been 20 years since Netscape pioneered the first bug bounty program. Since then, many have pointed to the value of bug bounty programs. Leading software companies such as Google and Facebook have all praised their public bug bounty programs. Bug bounty platforms have advocated for launching such programs as well.

At Cobalt, we have worked with organizations to launch more than 200 bug bounty programs. We have learned that running a public bug bounty program carries significant management costs. For most organizations, this means that a public bug bounty program often costs too much relative to the results it delivers. Organizations instead get a much higher ROI from crowdsourcing other security services, such as vulnerability assessments and penetration tests, which makes these a better first choice.

The hidden costs of public bug bounties

By its nature, a public bug bounty program is open to the entire world. Many of the participants are novice researchers who are eager to submit reports. As a result, a significant number of duplicate and low-criticality reports are submitted. This creates overhead costs that are often overlooked when planning a public bug bounty program:

- Low signal-to-noise: Public bug bounty programs have a low signal-to-noise ratio. For example, in Facebook’s and Google’s independent bug bounty programs, only 4% and 5% of submitted reports, respectively, end up with a reward. This means that more than 90% of submissions are duplicates or invalid reports. On bug bounty platforms the signal-to-noise ratio is slightly better, but it is still a significant challenge. A low signal-to-noise ratio might be acceptable for Google, but for others it causes a bug-alert overload that diverts scarce attention in the wrong direction.

- Significant management needed: Public bug bounty programs require dedicated engineers to manage communication between the researcher community and internal development teams. The more external researchers are involved, the more coordination and communication are required. A medium-sized organization should expect to dedicate a quarter of an engineer’s time to managing incoming reports, while enterprises need full-time dedicated engineers. This means that on top of paying for bounties, and whether the management is done in-house or outsourced, one can expect to spend an additional $25,000 to $250,000 per year just to manage the submissions a public bounty program generates.
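To make this overhead concrete, here is a minimal Python sketch of the arithmetic. It is a hypothetical back-of-the-envelope model, not a Cobalt tool. The function name `triage_overhead` and the volume of 1,000 reports per year are illustrative assumptions. The 5% reward rate and the $25,000 annual management cost are the figures quoted above.

```python
# Hypothetical back-of-the-envelope model of triage overhead in a bug bounty program.
# The report volume is an illustrative assumption; the 5% reward rate and the
# $25,000 management cost are the figures quoted in the text.

def triage_overhead(total_reports: int, reward_rate: float, management_cost: float) -> dict:
    """Estimate how much noise a program produces and what each valid report costs to manage."""
    if total_reports <= 0:
        raise ValueError("total_reports must be positive")
    if not 0 < reward_rate <= 1:
        raise ValueError("reward_rate must be in (0, 1]")
    valid = total_reports * reward_rate  # reports that end up with a reward
    noise = total_reports - valid        # duplicates and invalid reports
    return {
        "valid_reports": valid,
        "noise_reports": noise,
        "noise_share": noise / total_reports,
        # On average, this many reports must be read to find one valid report.
        "reports_read_per_valid": 1 / reward_rate,
        # Management overhead only; bounty payouts come on top of this.
        "management_cost_per_valid": management_cost / valid,
    }


if __name__ == "__main__":
    # Hypothetical: 1,000 reports per year, 5% rewarded, $25,000 annual management cost.
    print(triage_overhead(1000, 0.05, 25_000))
```

Under these assumptions, someone has to read about 20 reports for every valid one, and $25,000 of management overhead works out to roughly $500 per valid report before any bounty is paid. A lower reward rate makes both numbers worse.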

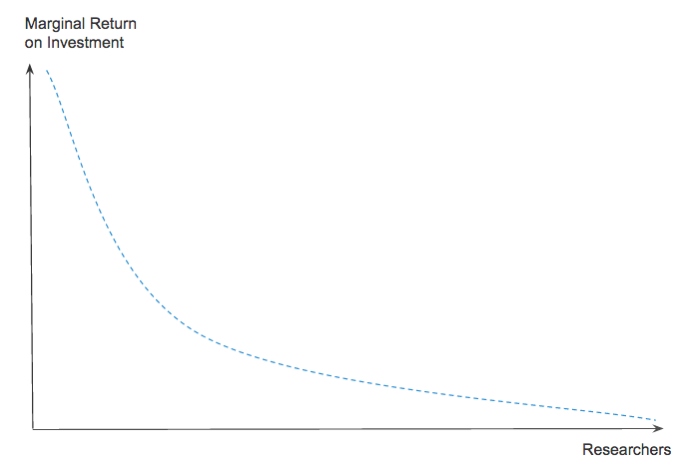

The low signal-to-noise ratio and the need for dedicated engineers drive up the overall cost. Simply put, the more researchers are involved, the more duplicate and invalid reports come in, and the more management they require. This means that the marginal value of each additional researcher diminishes as more and more researchers get involved.

If an organization has budget constraints, this relationship is crucial. It means that most organizations should start by working with a small set of selected researchers before diving into the long tail of security researchers. Hence, for many organizations, a public bug bounty program is not a good first choice.

From a security researcher’s perspective, the low signal-to-noise ratio in public bug bounties can be painful as well. Security researchers invest significant time in finding, documenting, submitting, and following up on reports, the majority of which end up declined or marked as duplicates. Instead of “storming the castle”, better matching of researchers to the security task at hand can bring significant improvements for the researchers as well.

Rewiring bug bounties

The challenges around public bug bounties have spurred an immense amount of innovation. For example, we have seen the emergence of private bug bounty programs, curated researchers, innovative reputation systems, automatic detection of duplicates, and recommendation systems.

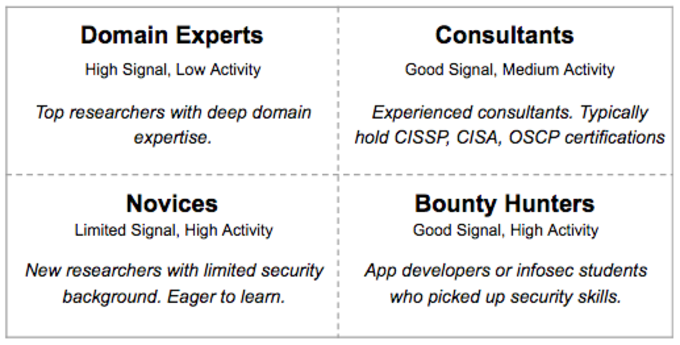

To dig deeper into crowdsourced security, one must take a closer look at the overall researcher community and understand its diversity. In general, we don’t believe that people fit in “a box”; however, to structure our thinking, we find the framework below useful.

Security Researchers

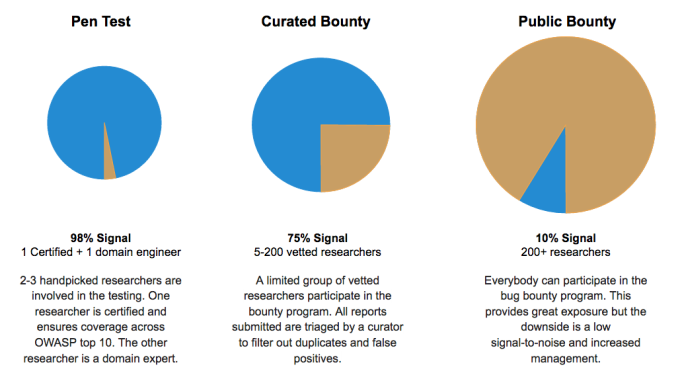

Being able to leverage different skill sets and personalities is the essence of what makes crowdsourcing strong. In reality, a public bug bounty is just one of a range of crowdsourcing models an organization can choose from. Thanks to industry developments and the ability to match specific researcher profiles to targeted security programs, there are now several approaches that fit into a secure software development lifecycle. We think of these as three main categories:

Variations in Crowdsourced Application Security

So which path to pick?

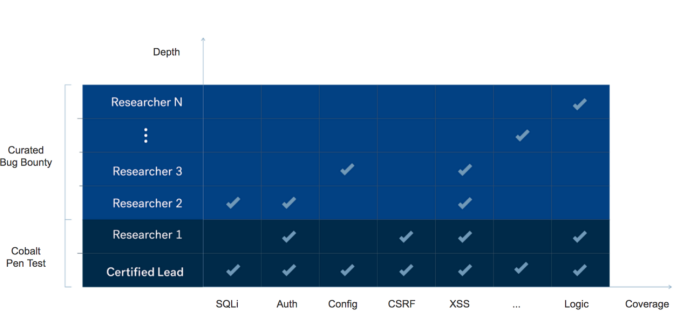

The pen test typically includes coverage via a crowdsourced vulnerability assessment as well as an in-depth penetration test. The goal is to ensure coverage of the OWASP Top 10 and SANS Top 25 issues, along with standard business logic flaws. We think of this as getting the basics covered. Starting here helps you catch low-hanging bugs with very few false positives.

A curated private bug bounty can be thought of as an ongoing crowdsourced penetration test with more depth than a periodic pen test. There is also typically a curator who filters and triages the researchers’ reports before they land in the organization’s inbox. The signal-to-noise ratio of curated programs is slightly lower than that of a pen test, and it depends on how strict the curation and filtering are and on the number of researchers involved. A curated private bug bounty differs from a public one in the number of researchers involved and in the degree to which those researchers have been vetted and proven.

If an organization’s goal is to increase product security as much as possible within its budget, which approach is best? Because of the overhead costs of a public bug bounty, we find that starting with a focused assessment and pen test is a much more cost-effective way to find security vulnerabilities. However, there is a limit to the number of bugs that can be found when only a few researchers are involved. Therefore, once the basics have been covered, it makes sense to go to the next level and expand the researcher pool.

Vulnerability Assessment, Pen Test and Bug Bounty

When establishing your crowdsourced security program we recommend the following approach:

- Start with a vulnerability assessment and crowdsourced pen test.
- Expand your testing via a curated private bounty program.
- Grow the security program to include more researchers. Consider making it public.

For example, if you have an application on which you don’t regularly perform security assessments and penetration tests, the best approach is to start small with a crowdsourced vulnerability assessment and penetration test, where you leverage high-quality researchers periodically and in a structured manner. By following this approach, your return on investment will be higher than if you start with a public bug bounty, with its unstructured, continuous testing by many researchers.

The choice is yours

We strongly believe in leveraging the cumulative knowledge of the security community. The trend kickstarted by public bug bounty programs has fundamental implications for the entire application security industry. It might be that a public bug bounty program is not the right starting point for your organization. However, collaborating with a distributed team of security researchers is definitely a good idea.

We therefore encourage all organizations to work with external security researchers, but urge you to be smart about choosing the approach that is right for you, and to protect your castle wisely.

Thanks!

Thanks to David Sopas, Devdatta Akhawe, and Robert Fly for reading drafts of this post and providing valuable feedback and comments.

If you are interested in learning more, also check out this post about the future of application security.

Additional Reading